"In this world nothing can be said to be certain, except death and taxes," wrote the US statesman Benjamin Franklin in 1789, on the eve of the French Revolution. And his words are still true today – of that we can be certain. Everything else in life, however, remains very much uncertain. Be that as it may, uncertainty is something that people still find unsettling. They look anxiously for certainties that they will never find. This yearning for certainty is part of our emotional and cultural heritage. People look for solace in religion, astrology and fortune tellers, who offer certainties that do not actually exist.

Many of us today feel we are living in the most uncertain times ever. In fact, the opposite is true, particularly in Europe – in spite of the financial crisis and other contemporary troubles. My grandparents’ generation can still remember a world war – hunger, expulsion, violence – whereas our physical wellbeing is more secure than ever before. Nevertheless, there seems to be a growing lack of acceptance that the world will always be risky and uncertain in spite of ever-greater security. The busy lives of today’s children are planned down to the very last detail, beginning at conception and including activities from birth onwards. And woe betide the parent who lets their child ride a bicycle without a helmet. My childhood was riskier and less planned, and my parents’ childhood even more so.

Perhaps nowadays people have the impression of being able to control and predict everything, and so they feel they have to do so. But this impression of predictability is, of course, an illusion. Which makes us all the more unsettled when a situation does not pan out as we expected.

Uncertainty in dealing with uncertainty – and its consequences

This uncertainty in dealing with uncertainty has become a problem in today’s technology-driven society. Our fears and anxieties are repeatedly roused and we fall into the trap of aimless action for action’s sake. Who does not remember the apocalyptic scenarios of computers crashing because of the millennium bug? In the end, Y2K did not accidentally trigger a nuclear war, but a few slot machines did stop working. Not long afterwards, in the early 2000s, came BSE, or ‘mad cow disease’. Two German ministers had to resign because they had created a false sense of security. "German beef is safe," they said. And us? We just ate less beef until the infected cows had disappeared from the headlines. At the start of the bird flu scare, every dead bird made it on to the front pages, accompanied by men in protective suits. We spent millions of euros on vaccinations for swine flu, most of which were not used. Later there was the EHEC outbreak, and the authorities warned against eating salad.

Thankfully, humanity has survived all these threats relatively unscathed, despite the waves of fear that they provoked. That does not mean that they did not warrant the attention given to them or that they should not have been investigated. And the idea is not to portray people’s fears as ‘wrong’ or irrational. Instead we have to understand why people are more afraid of certain risks than of others, so that we can deal appropriately with these fears.

After all, fear itself can become a security risk, as was shown after the terrorist attacks of 11 September 2001. Around 3,000 people lost their lives in the attacks, including the 256 passengers who died in the hijacked aircraft. These events precipitated the so-called war on terror and an expansion of state surveillance that continues to threaten our civil liberties and whose magnitude is only just becoming evident thanks to recent revelations. But the attacks did not only cause deaths directly; they also did so indirectly. They spread fear and panic, which caused large numbers of people to switch from aeroplanes to cars – with fatal consequences. As driving is much more dangerous than flying, there were around 1,600 more traffic deaths in the twelve months following 9/11 than in the previous years.

These examples illustrate how a flawed approach to uncertainty can lead to erroneous decisions, with dramatic consequences that even include avoidable deaths. So what can be done? Should we keep people away from decision making and outsource it to expert committees who then – with well-meaning paternalism – make the ‘right’ decisions for us? Absolutely not. For one thing, expert judgements can by no means always be relied upon, as has been seen time and time again in comparisons of predictions and actual outcomes in the worlds of politics, science, finance and medicine. Moreover, experts often do not share the same values and objectives as us. No, we have to take our own decisions into our own hands – and we have the ability to do so.

A toolbox for good decisions

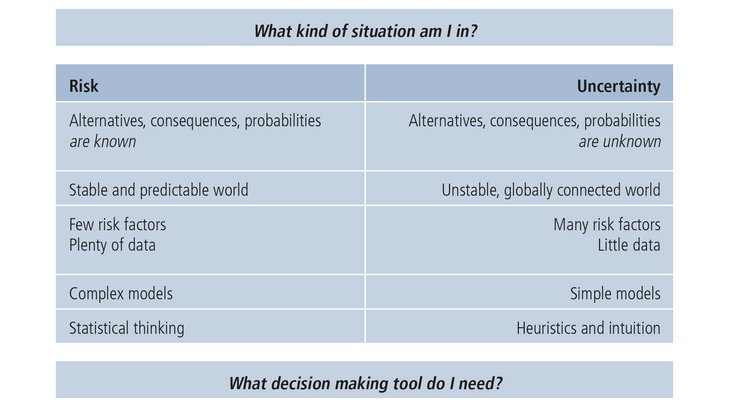

In order to make good decisions under uncertainty we first of all have to dispense with Leibniz’s dream that there is a universal tool for making the right decisions. Instead we need a toolbox equipped with a range of decision-making strategies. It should contain approaches as different as chalk and cheese, such as statistical thinking and gut feelings. Finally, we have to know what kind of uncertainty we are facing before we can select the appropriate tool.

If I am in a situation in which I know all the options and more or less know the probability of each potential outcome – we call this decision making under risk – then statistical thinking is what I need. This is often the case in medical decisions, for example when you are considering taking a particular drug or thinking about undergoing cancer screening. Based on randomised, controlled studies of large samples, you can obtain a relatively clear picture of how many people benefit from a particular medical measure and how many people suffer harm from it – and to what extent. This is where statistical analysis and complex calculations and models are the right tools.
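To make the idea of statistical thinking under risk concrete, here is a minimal sketch of how trial results can be turned into plain counts per 1,000 people. The function name `summarise_trial` and all the numbers are hypothetical and chosen purely for illustration; they do not come from any real study.

```python
from fractions import Fraction


def summarise_trial(events_control, n_control, events_treated, n_treated, per=1000):
    """Express trial results as frequencies per `per` people (illustrative helper)."""
    if events_control == 0:
        raise ValueError("relative reduction is undefined without control-group events")
    # Exact fractions avoid rounding noise in the intermediate steps.
    risk_control = Fraction(events_control, n_control)
    risk_treated = Fraction(events_treated, n_treated)
    absolute_reduction = risk_control - risk_treated
    return {
        "control_per": float(risk_control * per),
        "treated_per": float(risk_treated * per),
        "absolute_reduction_per": float(absolute_reduction * per),
        "relative_reduction": float(absolute_reduction / risk_control),
    }


# Hypothetical numbers: 50 of 10,000 untreated and 40 of 10,000 treated people affected.
summary = summarise_trial(events_control=50, n_control=10_000,
                          events_treated=40, n_treated=10_000)
print(summary)
```

With these made-up figures, a "20 per cent reduction" corresponds to one person fewer in every 1,000, which is why it helps to look at absolute numbers as well as relative ones.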

In the vast majority of situations, however, I do not even know all the options I have, let alone the probability of various outcomes. We call this decision making under uncertainty. Here we have to dispense with statistical analysis and turn our attention to gut feelings and simple decision rules (‘heuristics’). We have to learn to ignore information so that we can make decisions faster, more economically and with greater accuracy. The point about greater accuracy may seem surprising. After all, we often hear that complex problems need complex solutions. In fact, the opposite is true: complex problems in uncertain situations actually call for simple decision-making strategies. The financial markets are a perfect example. Studies have shown that supposedly amateurish strategies such as ‘buy what you know’ and ‘spread your money evenly across XYZ options’ can prove more successful than following the recommendations of experts and complex, Nobel Prize-winning investment strategies. Similarly, in the case of experienced decision makers, snap judgements are often better than those that are made slowly and ostensibly with greater deliberation – and that is true in medicine, in sport and among consumers.
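The ‘spread your money evenly’ rule is simple enough to write down in a few lines. The following is only a sketch of that heuristic; the function name `equal_split`, the budget and the option names are hypothetical.

```python
def equal_split(budget, options):
    """Divide a budget evenly across the given options, ignoring all other information."""
    if not options:
        raise ValueError("at least one option is required")
    share = budget / len(options)
    return {name: share for name in options}


# Hypothetical example: 9,000 euros spread across three placeholder funds.
allocation = equal_split(9000.0, ["fund_a", "fund_b", "fund_c"])
print(allocation)
```

The rule deliberately uses no forecasts, past returns or correlations. That is what makes it a heuristic: it needs nothing to be estimated, so there are no estimation errors to carry over into the decision.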

Conclusion

Our world will always be an uncertain one. We have to free ourselves from the illusion that everything can be controlled and predicted, but without becoming paralysed by that realisation. It is still possible to make good decisions. You just have to remember that the more predictable the situation (‘risk’), the greater the need for statistical thinking and complex models; the more unpredictable the situation (‘uncertainty’), the greater the need for simple heuristics and intuition (Figure 1).

Figure 1: The art of good decision making: which tool do I need for which situation? (adapted from Gigerenzer, G. (2014). Risk savvy: How to make good decisions. New York: Viking.)

The art of good decision making lies in recognising which kind of situation you are in, so that you can deftly apply the right decision-making tool for the job.

And it requires the courage not to avoid or postpone decisions but to boldly take control of them and assume responsibility for the choices you make.

About the author

Wolfgang Gaissmaier, born 1977, investigates decision making under uncertainty as a full professor of social psychology and decision sciences at the University of Constance. Previously he was chief research scientist at the Harding Center for Risk Literacy at the Max Planck Institute for Human Development, Berlin, Germany. He received the Otto Hahn Medal for outstanding scientific achievements from the Max Planck Society and is a fellow of the Young Academy of the Berlin-Brandenburg Academy of Sciences and Humanities and the German Academy of Sciences Leopoldina.

[Source: The text is taken from the book "The measurement of the risk". We thank Union Investment Institutional GmbH for kind permission to publish it on RiskNET.]